When the Report Becomes the System of Record

This is the fourth post in our learning series on Modern Data Governance, leading up to a free webinar on April 2nd. Click here for webinar registration, and here to read the first post on data standards.

At many universities, the most authoritative source of institutional data is not the student information system, the data warehouse, or even the institutional research office.

It’s a dashboard. Or a spreadsheet. Or sometimes, a PDF circulated to a committee.

This situation rarely happens intentionally. Instead, it emerges gradually as institutions build reporting processes, dashboards, and analytical workflows around their operational systems. Over time, the report stops being a reflection of the system of record and begins to function as the system of record itself.

One sign that this is happening is hearing a question like this:

"Why does the SIS say something different than the dashboard?"

How Reports Become Systems

Reports become systems of record not because people intend them to, but because they accumulate trust. A dashboard becomes the version of the truth that leadership uses. A spreadsheet becomes the dataset that analysts reuse. A PDF presented to Senate becomes the official number referenced in future decisions.

At that point, the report is no longer just a representation of institutional data. It has become an artifact that the institution relies upon for decision-making.

This happens in many ways:

- A Tableau dashboard becomes the number everyone references for enrollment.

- An Excel extract is reused for months because it contains the "clean" version of the data.

- A PDF circulated to Senate becomes the official record of a metric, even if later analysis shows the number may not have been calculated correctly.

- Analysts begin validating numbers in operational systems against dashboards rather than the other way around.

None of these behaviours are irrational. They are simply the natural outcome of institutions trying to work with complex data environments.

Why Metrics End Up in Living Reports

There are several reasons institutions drift into a state where reports function as systems of record:

- Operational systems are not designed for analysis. Student information systems, HR systems, and financial systems are built to run transactions, not to answer analytical questions. Extracting meaningful insights from these systems can be difficult, slow, or dependent on specialized technical knowledge (this is part of why data standards like MortarCAPS can be so helpful).

- Business logic migrates into reports. The rules that determine how metrics are calculated (such as how to define "enrolled students," how to group courses together for reporting, or which programs belong to a particular faculty) are frequently embedded directly inside dashboard calculations or spreadsheet formulas. In many institutions, the only place these definitions exist is inside the report itself.

  For example, an institution may define "enrolled headcount" using a complex set of rules that determine which students count in which term. Those rules might live inside a Tableau calculated field or inside an Excel formula used by an analyst. Similarly, grouping courses together for reporting purposes, such as combining multiple course sections into a single reporting category, may exist only within a reporting workbook. When this happens, the report is no longer simply visualizing data; it is performing essential data modelling functions that are invisible to the broader institution.

- Reports gain institutional authority. If a particular dashboard or report is used regularly by senior leadership, it quickly becomes the trusted reference point for numbers across the organization. Over time it stops being just one view of the data and becomes the number people cite. When those numbers are then presented in governance settings (a Senate presentation, committee report, or annual report), they become embedded in institutional memory. At that point the number can be extremely difficult to change, even if new analysis reveals that it was calculated incorrectly, because the institution has already formally recorded and referenced it.
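To make the alternative concrete, here is a minimal Python sketch of an "enrolled headcount" definition kept in one shared, versioned module instead of inside a Tableau calculated field or Excel formula. The counting rules, status codes, term codes, and names (`Registration`, `enrolled_headcount`, `HEADCOUNT_DEFINITION_VERSION`) are all hypothetical illustrations, not a recommended institutional definition; in practice the same idea is often expressed as a governed warehouse view.

```python
from dataclasses import dataclass

# Hypothetical version tag for the governed definition; reports cite it
# so readers know which rules produced a number.
HEADCOUNT_DEFINITION_VERSION = "2025.1"


@dataclass(frozen=True)
class Registration:
    student_id: str
    term: str
    status: str        # hypothetical codes, e.g. "registered" or "withdrawn"
    audit_only: bool


def enrolled_headcount(registrations: list[Registration], term: str) -> int:
    """Count distinct students enrolled in `term` under the governed rules.

    Hypothetical rules: a student counts once if they hold at least one
    registration in the term that is neither withdrawn nor audit-only.
    """
    counted = {
        r.student_id
        for r in registrations
        if r.term == term and r.status != "withdrawn" and not r.audit_only
    }
    return len(counted)


if __name__ == "__main__":
    sample = [
        Registration("S1", "2025FA", "registered", False),
        Registration("S1", "2025FA", "registered", False),  # second course, same student
        Registration("S2", "2025FA", "withdrawn", False),
        Registration("S3", "2025FA", "registered", True),   # audit-only
        Registration("S4", "2025WI", "registered", False),  # different term
    ]
    print(enrolled_headcount(sample, "2025FA"), HEADCOUNT_DEFINITION_VERSION)
```

Running it prints `1 2025.1`: student S1 is counted once despite two registrations, and the withdrawn, audit-only, and other-term records are excluded. Because every report would call the same definition, a rule change happens once, under review, and the version tag travels with the number.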

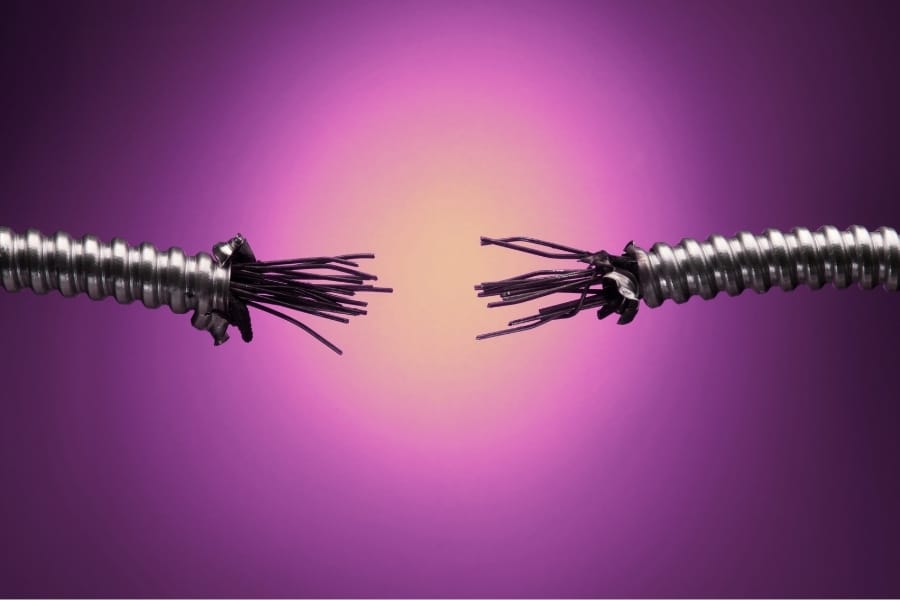

The Architecture Inversion

When reports become systems of record, the institutional data architecture effectively inverts itself.

The architecture institutions believe they have typically looks like this:

System of Record → Data Warehouse → Reports

In this model, operational systems hold the authoritative data, the warehouse prepares it for analysis, and reports visualize the results.

But in practice, many institutions operate closer to something like this:

System of Record → Warehouse → Report → Spreadsheet → Presentation → Decision

At this point, the report layer has effectively become the semantic layer of the institution. It is where definitions live, where transformations occur, and where numbers are trusted.
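One way to see the inversion is to list each stage of the chain and note whether it applies metric logic. The minimal Python sketch below does this for the practical chain above; the `applies_metric_logic` flags and the `logic_downstream_of` helper are assumptions for illustration, and a real inventory would come from your own lineage mapping.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Stage:
    name: str
    applies_metric_logic: bool  # does this stage define or transform a metric?


# The practical chain from the text; the logic flags are illustrative assumptions.
PIPELINE = [
    Stage("System of Record", False),
    Stage("Warehouse", True),
    Stage("Report", True),        # e.g. a Tableau calculated field
    Stage("Spreadsheet", True),   # e.g. an Excel formula
    Stage("Presentation", False),
    Stage("Decision", False),
]


def logic_downstream_of(pipeline: list[Stage], boundary: str) -> list[str]:
    """Return the stages after `boundary` that still apply metric logic.

    In the intended architecture this list is empty: definitions live at or
    before the warehouse, and reports only visualize.
    """
    names = [s.name for s in pipeline]
    start = names.index(boundary) + 1  # raises ValueError if boundary is absent
    return [s.name for s in pipeline[start:] if s.applies_metric_logic]


if __name__ == "__main__":
    print(logic_downstream_of(PIPELINE, "Warehouse"))
```

For the assumed flags it prints `['Report', 'Spreadsheet']`, the two places where definitions have moved out of the data layer. In the intended architecture, the same check after the warehouse would return an empty list.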

The Risks

When reports function as systems of record, several risks emerge:

- Version drift. Multiple extracts of the same dataset begin to circulate, each representing slightly different points in time or slightly different definitions.

- Loss of data lineage. When a number appears in a dashboard, a spreadsheet, and a presentation deck, it can become unclear which transformation steps produced it.

- Business logic embedded in fragile places. A change to a dashboard calculation or spreadsheet formula can alter institutional metrics without anyone realizing the broader implications.

- Decision-making disconnected from underlying systems. Leaders may believe they are making decisions based on authoritative data, when in fact they are relying on artifacts that were created for reporting purposes.
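Version drift, the first risk above, becomes visible once each circulating copy of a number records which definition version and as-of date produced it. This Python sketch groups copies of the same metric and flags the ones that disagree; the `MetricCopy` fields, artifact names, and figures are invented for illustration and do not describe any real institution.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class MetricCopy:
    artifact: str             # where the number appears (hypothetical names)
    metric: str
    value: int
    definition_version: str   # which governed rule set produced it
    as_of: str                # ISO date of the underlying extract


def find_drift(copies: list[MetricCopy]) -> dict[str, list[str]]:
    """Map each metric whose circulating copies disagree to the artifacts involved.

    Copies disagree when they differ in value, definition version, or as-of
    date, any of which means readers may be comparing different numbers.
    """
    by_metric: dict[str, list[MetricCopy]] = defaultdict(list)
    for c in copies:
        by_metric[c.metric].append(c)
    drifted: dict[str, list[str]] = {}
    for metric, group in by_metric.items():
        variants = {(c.definition_version, c.as_of, c.value) for c in group}
        if len(variants) > 1:
            drifted[metric] = sorted(c.artifact for c in group)
    return drifted


if __name__ == "__main__":
    copies = [
        MetricCopy("Enrollment dashboard", "fall_headcount", 18250, "2025.1", "2025-10-01"),
        MetricCopy("Analyst extract.xlsx", "fall_headcount", 18190, "2024.3", "2025-09-15"),
        MetricCopy("Senate deck.pdf", "fall_headcount", 18190, "2024.3", "2025-09-15"),
        MetricCopy("Enrollment dashboard", "fall_fte", 15020, "2025.1", "2025-10-01"),
    ]
    print(find_drift(copies))
```

With this sample, `fall_headcount` is flagged because the dashboard and the Senate deck carry different definition versions and dates, while `fall_fte` appears in only one place and is not.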

Reconnecting Reports to Systems

None of this means that dashboards, spreadsheets, or reports are inherently problematic. In fact, they are essential tools for making data accessible and understandable.

The challenge arises when the logic that defines institutional metrics lives only inside those artifacts.

To avoid this architecture inversion, institutions need to make metric definitions explicit and move critical transformations closer to the data layer. They also need better visibility into how numbers move through their analytical environments.

This is where practices like data lineage and modern data governance become important. By mapping how data flows from operational systems through warehouses and into reports, institutions can better understand where important definitions live and where risks may exist.
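At its core, mapping lineage means recording which artifacts feed which. The Python sketch below stores those edges in a plain dictionary and walks upstream from a presentation to list everything it depends on. The artifact names and the `trace_upstream` and `roots` helpers are hypothetical; in practice a metadata or lineage tool would typically maintain this graph rather than a hand-written dictionary.

```python
from collections import deque

# Hypothetical lineage edges: each artifact maps to the artifacts it is built from.
UPSTREAM: dict[str, list[str]] = {
    "Senate deck.pdf": ["Analyst extract.xlsx"],
    "Analyst extract.xlsx": ["Enrollment dashboard", "Program-to-faculty mapping.xlsx"],
    "Enrollment dashboard": ["warehouse.enrollment_fact"],
    "warehouse.enrollment_fact": ["SIS registrations"],
    "Program-to-faculty mapping.xlsx": [],  # hand-maintained; no recorded source
    "SIS registrations": [],                # system of record
}


def trace_upstream(artifact: str, edges: dict[str, list[str]]) -> list[str]:
    """Breadth-first walk from `artifact` to every source it depends on.

    Returns dependencies in discovery order. Artifacts missing from `edges`
    are treated as having no recorded upstream, which is itself a lineage gap.
    """
    seen: set[str] = {artifact}
    order: list[str] = []
    queue = deque([artifact])
    while queue:
        current = queue.popleft()
        for source in edges.get(current, []):
            if source not in seen:  # the seen set also guards against cycles
                seen.add(source)
                order.append(source)
                queue.append(source)
    return order


def roots(artifact: str, edges: dict[str, list[str]]) -> list[str]:
    """Dependencies with no recorded upstream: what the number ultimately rests on."""
    return [a for a in trace_upstream(artifact, edges) if not edges.get(a)]


if __name__ == "__main__":
    print(trace_upstream("Senate deck.pdf", UPSTREAM))
    print(roots("Senate deck.pdf", UPSTREAM))
```

Here the trace shows that the Senate figure ultimately rests on two roots: the SIS and a hand-maintained mapping spreadsheet, which is exactly the kind of report-embedded definition described earlier.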

In my previous post on shadow systems, I discussed how unofficial data environments often emerge to fill gaps in institutional systems. The same dynamic is at play here. Reports become systems not because institutions design them that way, but because people need reliable numbers to do their work.

The goal is not to eliminate reports, but to ensure that the logic behind them is visible, governed, and connected back to the systems that hold the institution's data.

When that happens, reports return to their proper role: helping institutions understand their data rather than quietly becoming the system of record themselves.

Webinar: Modern Data Governance is Live

Date: April 2, 2026

Time: 10:00am (PT)

Presented by: Andrew Drinkwater

Register here: Teams Webinar

If you're a data governance committee member, data steward, Registrar, IT leader, Dean, or manager interested in taking a modern approach to data governance, this webinar is for you.

All registrants will receive a free Active Data Governance Self-Assessment tool and Identity Lifecycle Mapping worksheet. By working through the assessment and worksheet (alongside reading this series), you'll understand where your institution sits on the scale from static to live governance, what gaps exist in your process map, and where shadow datasets and processes might exist. Beyond showing you where your challenges are, these sheets can give you a launch pad for taking your concerns back to your governance or leadership team.

In the webinar, Andrew will cover how static data dictionaries and handbooks decline in accuracy over time, and how live metadata improves accuracy over the long term and makes your institution action-ready. We'll talk about efficiency, lineage mapping, why visually mapping data flows matters, and how data governance is key to scaling up your information technology and institutional research infrastructure. We'll show practical examples of how metadata management is used by IR, IT, HR, and Registration offices. Andrew will also take questions from attendees at the end of the webinar.

The webinar will be recorded for anyone unable to attend live.